Today's edge devices ship with static AI models, fixed at the moment of training and unable to adapt as real-world conditions shift.

When performance degrades, the only option is a costly loop: collect data, send it to the cloud, retrain, push an update, and hope conditions haven't changed by the time it arrives. This process takes hours to days, costs thousands per cycle, and is often impossible where bandwidth is limited or data can't leave the device.

The architecture behind this was built for a different era. It's slow, power-hungry, and impossible to scale. The intelligence is always a step behind the world it's supposed to understand.

The Energy-Intelligence Gap

AI training typically draws hundreds of watts of GPU power. For battery-operated edge hardware, that puts conventional on-device learning out of reach.

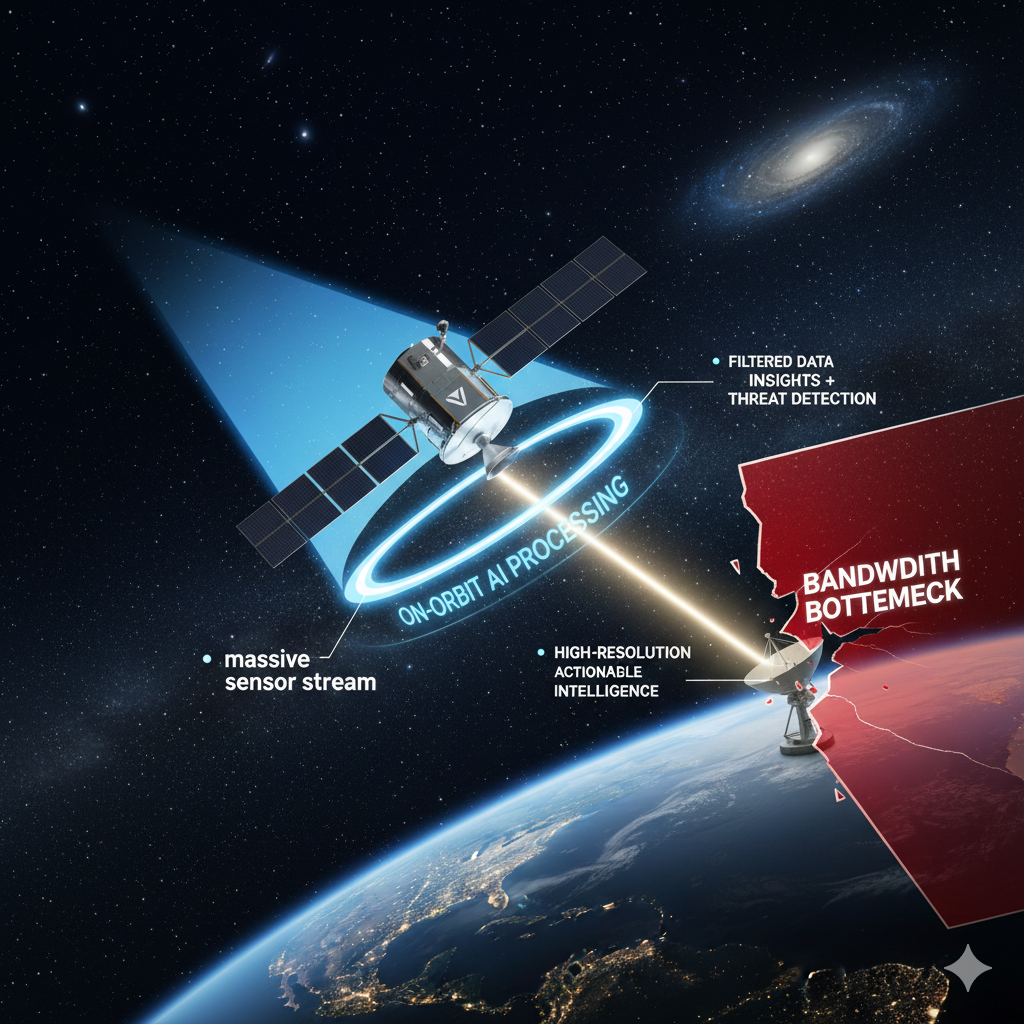

The Bandwidth Bottleneck

Updating a static model means transmitting raw data to the cloud, creating latency, driving up infrastructure costs, and exposing sensitive data to security risks.
